How to Create a Responsible AI Policy for Non-Profits: 5-Pillar Framework

In brief

Step-by-step guide to building a responsible AI charter for non-profit organizations. Covers 5 key pillars — environment, inclusion, ethics, transparency, and innovation — with energy consumption data and a free downloadable template (CC BY 4.0).

A Charter Designed for the BeEducation Network

In spring 2025, I had the pleasure of leading a workshop organized by BeEducation, a network of initiatives and associations committed to improving the school system in Belgium. Created in 2019, the network brings together about forty members active in education in the Wallonia-Brussels Federation. They fight educational inequalities, school dropout, and bullying, while promoting inclusion and responsible citizenship.

The workshop’s objective: help participants — coordinators, project managers, and communications officers from non-profits in the education sector — understand the opportunities and challenges of responsible artificial intelligence use.

For this workshop, I designed an AI Usage Charter for the BeEducation network, a practical document that I’m making available under an open license (Creative Commons CC BY 4.0) for any organization wishing to adapt it to their own needs.

📄 Responsible AI Usage Charter

Editable Word document · CC BY 4.0 License


Facing the Omnipresence of AI: Choosing Appropriation Over Rejection

Whether we like it or not, artificial intelligence is now embedded in most of our daily tools: search engines, office suites, messaging platforms, collaborative platforms. Faced with this reality, two attitudes are possible: wholesale rejection, or critical appropriation.

I chose the second path — and that’s what I encourage in my consulting work. Not out of blind technophile enthusiasm, but out of pragmatism: non-profits, often constrained by limited human and financial resources, can find valuable support in these tools to accomplish their mission.

In an ideal world, the social sector would benefit from public support proportionate to its societal impact. Meanwhile, we must make do with available means — including technological ones — while maintaining a clear-eyed view of their limitations and risks.

This is precisely the objective of this charter: to provide a framework for using AI thoughtfully, without naivety or systematic rejection.

💡 Historical milestones: AI before ChatGPT

- 1950 — Alan Turing publishes Computing Machinery and Intelligence and proposes the Turing Test

- 1956 — Dartmouth Conference: the term “artificial intelligence” is formalized

- 1958 — Frank Rosenblatt designs the Perceptron, precursor of neural networks

- 1966 — ELIZA, first conversational agent, simulates therapeutic dialogue

- 1970-80 — Rise of expert systems (MYCIN), followed by an “AI winter” (funding reduction)

- 1990s — Banking fraud detection, spam filters, assisted radiology diagnosis

- 2000s — Predictive GPS, personalized recommendations (Netflix), automatic translation (Google Translate, 2006)

- 2010s — Voice assistants (Siri), facial recognition, accessibility (automatic subtitling, smart screen readers)

- 2017 — Early detection of diabetic retinopathy and skin cancer through deep learning

- 2022+ — Generative AI (ChatGPT, Claude, Mistral) accessible to the general public

Artificial intelligence actually encompasses several distinct families: predictive AI (anticipating behavior, trends), prescriptive AI (recommending optimal action), and generative AI (producing new content). It’s the latter, powered by large language models (LLMs), that triggered the 2022 shift: for the first time, capabilities previously reserved for specialists became accessible to the general public — and it’s precisely this democratization that now crystallizes ethical, environmental, and societal debates.

AI: A Social Impact Amplifier for Non-Profits

When used well, artificial intelligence can become a real lever for non-profit organizations. It reduces repetitive tasks, strengthens certain skills, and above all — for often small teams — frees up time for what matters: the social mission.

7 Concrete Usage Categories

During the workshop, we explored seven major application families of AI for non-profits:

Content generation: assisted writing (grant applications, activity reports, newsletters), editorial calendar creation, translation, and SEO optimization.

Productivity and collaboration: automatic meeting transcription, summary and action item generation, project management assistance.

Data analysis and processing: help with complex Excel formulas, automatic visualization, anomaly detection, and tracking reports.

Stakeholder relations: automating frequent responses and follow-ups (funders, donors, volunteers) to free up time for high-value human exchanges.

Smart documents: querying large documents (regulations, contracts, studies), automatic classification, internal search engine.

Research and strategic monitoring: tracking calls for projects and funding opportunities, sector monitoring, synthesis of scientific studies.

Custom automation (advanced level): AI integration into existing tools (CRM, ERP, management platform), automated workflow creation for recurring tasks, sector monitoring with personalized alerts.

The 7 AI usage categories identified during the BeEducation workshop

Why Is a Charter Necessary?

Choosing Your Uses: Not All Tasks Have the Same Cost

Even within generative AI, environmental impact gaps are considerable. Generating text, analyzing an image, or producing a video don’t mobilize the same resources — far from it.

For a non-profit, the choice is therefore not “AI or no AI,” but “which task, for what mission gain?”

The Proportionality Principle

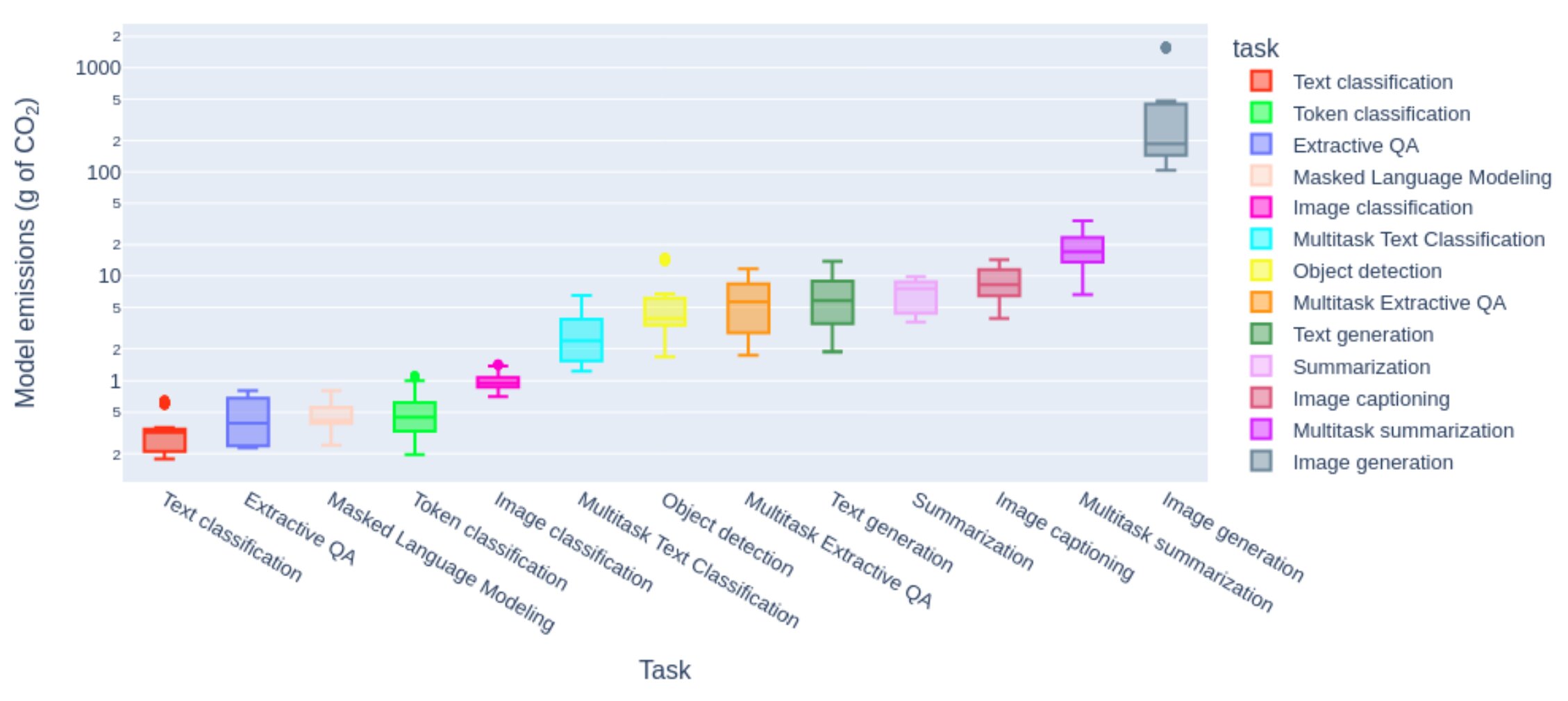

According to the study by Luccioni et al. (2023) on AI models’ energy consumption, the gap between tasks is considerable:

Source: Luccioni et al. (2023), “Power Hungry Processing: Watts Driving the Cost of AI Deployment?”

| Usage | Relative Cost | Recommendation |
|---|---|---|
| Text (summary, sorting, response) | 1× | Reasoned use, without excess |
| Image analysis | 10–50× | With intention |
| Image generation | 100–1,000× | Avoid except justified exception |
| Video generation | 1,000–10,000× | Prohibit in most cases |

The order of magnitude is what matters: these energy gaps vary with the model, the infrastructure, and the output resolution, but they remain large in every case. Image generation consumes roughly 100 to 1,000 times more energy than a text task, and video widens the gap further.
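To make these ratios concrete, here is a minimal sketch that converts a month of AI usage into "text-task equivalents" using the ranges from the table above. The function name `text_task_equivalents` and the sample usage figures are hypothetical, chosen only for illustration. The result is a relative comparison, not a measurement in kWh or CO2.

```python
# Relative cost ranges (low, high) copied from the table above; 1 = one text task.
RELATIVE_COST = {
    "text": (1, 1),
    "image_analysis": (10, 50),
    "image_generation": (100, 1_000),
    "video_generation": (1_000, 10_000),
}


def text_task_equivalents(usage):
    """Return (low, high) totals expressed in text-task equivalents.

    `usage` maps a task type to the number of times it was run.
    """
    low = sum(RELATIVE_COST[task][0] * count for task, count in usage.items())
    high = sum(RELATIVE_COST[task][1] * count for task, count in usage.items())
    return low, high


# Hypothetical month: 200 text tasks, 5 image analyses, 2 generated images.
monthly_usage = {"text": 200, "image_analysis": 5, "image_generation": 2}
low, high = text_task_equivalents(monthly_usage)
print(f"Between {low} and {high} text-task equivalents")
```

In this made-up example, two generated images can weigh as much as, or far more than, the 200 text tasks combined. That is the point of the proportionality principle.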

Not All Models Are Equal

Beyond task type, model choice matters. Using a frontier model (the most powerful and energy-intensive on the market) for an elementary task — summarizing an email, rephrasing a sentence — constitutes a significant disproportion between deployed power and actual need.

| Category | Examples | Consumption |
|---|---|---|
| Compact (1-3B parameters) | Llama 3.2 1B, Phi-3-mini | 1× (reference) |
| Light (7-8B parameters) | Mistral 7B, GPT-4o mini, Claude Haiku | 4-6× |
| Medium (70B parameters) | Llama 3.3 70B | 20-30× |
| Frontier (>100B parameters) | GPT-5, Claude Opus, Mistral Large, Llama 405B | 50-100×+ |

💡 Good news: performant lightweight models

Thanks to distillation and quantization techniques, lightweight models now achieve 80-90% of heavy model performance. A meeting summary, a rephrasing, a translation? Lightweight models like GPT-4o mini or Claude Haiku are largely sufficient — for 10 to 50 times less energy.

Sources: Samsi et al., AI Energy Score, Stanford AI Index 2025. These orders of magnitude can vary by a factor of 10 depending on hardware and context.
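As a rough illustration of what switching tiers changes, the sketch below derives a savings range from the consumption column of the model table. The function name `savings_factor` is hypothetical, and the frontier row is open-ended in the table ("50-100×+"), so 100 is used here only as a floor for its upper bound.

```python
# Relative consumption ranges (low, high) from the model table; compact = 1.
# The frontier row is open-ended in the table, so its upper bound may be higher.
TIER_CONSUMPTION = {
    "compact": (1, 1),
    "light": (4, 6),
    "medium": (20, 30),
    "frontier": (50, 100),
}


def savings_factor(heavy, light):
    """Range of how many times less energy the `light` tier uses than `heavy`."""
    heavy_low, heavy_high = TIER_CONSUMPTION[heavy]
    light_low, light_high = TIER_CONSUMPTION[light]
    # Smallest saving: cheapest heavy run versus costliest light run.
    # Largest saving: costliest heavy run versus cheapest light run.
    return heavy_low / light_high, heavy_high / light_low


low, high = savings_factor("frontier", "light")
print(f"A light model uses roughly {low:.0f} to {high:.0f} times less energy")
```

With the table's figures, the gap between a frontier and a light model comes out at about 8 to 25 times. Given the factor-of-10 uncertainty noted in the sources, treat this as an order of magnitude and nothing more precise.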

What This Means for Your Organization

For a non-profit, the responsible strategy is not to avoid AI, but to keep usage proportionate to its real added value:

- Text → relevant use when it frees up time for the mission: summaries, rephrasing, writing assistance

- Image → reserve for high-impact communications, when a free image bank isn’t sufficient

- Generated video → prohibit, except in exceptional cases where no alternative exists

This logic echoes a principle familiar to mission-driven organizations: concentrate resources where they multiply action, not where they impress.

🧭 A simple test before each use

Does this AI use free up human time? Improve access or service quality? Without excluding part of the audience?

If no answer is positive, the use is not a priority.
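The three questions can be written down as a small checklist, for example to include in an internal request form. This is a minimal sketch of the rule exactly as stated above. The names `UseCaseCheck` and `is_priority` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCaseCheck:
    """Answers to the three questions of the test (True = yes)."""
    frees_human_time: bool
    improves_access_or_quality: bool
    excludes_nobody: bool


def is_priority(check):
    """Apply the rule literally: with no positive answer, the use is not a priority."""
    return any((
        check.frees_human_time,
        check.improves_access_or_quality,
        check.excludes_nobody,
    ))


# Hypothetical example: automatic meeting summaries for a small team.
meeting_summaries = UseCaseCheck(
    frees_human_time=True,
    improves_access_or_quality=False,
    excludes_nobody=True,
)
print(is_priority(meeting_summaries))
```

An organization may prefer a stricter reading in which excluding part of the audience vetoes the use regardless of the other two answers. That would be a local policy choice, not something the test above imposes.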

Challenges Not to Be Overlooked

Beyond the environment, other issues deserve our vigilance:

Disinformation takes on new dimensions with deepfakes and unverified generated content. The ease of producing fake content — texts, images, cloned voices — makes source verification more crucial than ever, particularly for organizations whose credibility is essential capital.

The digital divide remains concerning. In Belgium, 40% of the population is in a situation of digital vulnerability, according to the 2024 Digital Inclusion Barometer of the King Baudouin Foundation. Despite progress since 2021 (when the figure was 46%), inequalities persist: 54% of job seekers and 42% of single-parent families struggle to access digital services. A non-profit that digitalizes its services must make sure it does not exclude part of its audience.

Algorithmic biases are not theoretical abstractions. In 2018, Amazon had to abandon a recruitment tool that systematically penalized women's CVs: the algorithm had learned the biases present in ten years of historical data. For a non-profit working with vulnerable populations, using AI without critical questioning risks reproducing the very discrimination it fights.

Data protection also calls for increased vigilance. GDPR imposes strict rules, but generative AI raises new questions: where does the data entered in a prompt go? How can the confidentiality of beneficiary information be guaranteed?

A cardinal principle: AI can assist, but must never decide alone when a decision directly affects people (guidance, selection, evaluation). This principle is enshrined in the European AI Regulation (Art. 14) and GDPR (Art. 22).

These issues led us, together with the workshop participants, to design a structured framework.

The Five Pillars of the Charter

The charter is structured around five fundamental commitments, inspired by the format of the Responsible Digital Charter of the Institut du Numérique Responsable (INR).

1. Environment: Sober and ecologically responsible use

- Favor classic search engines for simple queries

- Reserve AI for situations where it brings real added value

- Formulate precise and targeted queries

- Restrict very energy-intensive uses (image, video generation) to projects with strong social value

- For advanced users: measure carbon footprint via EcoLogits Calculator or favor lightweight open-source models (e.g., Mistral 7B)

2. Accessibility and inclusion: Leave no one behind

- Ensure our AI uses don’t reinforce existing digital divides

- Train teams in informed, critical, and responsible use

- Ensure generated content remains accessible to people with disabilities

- Mobilize AI as a tool for accessibility and social inclusion

3. Ethics and governance: Responsible practices

- Respect GDPR and anonymize sensitive data

- Remain vigilant against algorithmic biases

- Clearly define what can be delegated to AI and what must remain human

- Systematically verify generated content before dissemination
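The commitment to anonymize sensitive data can be partly supported by masking obvious identifiers before text is pasted into a prompt. The sketch below is only an illustration: the `redact` function and both patterns are hypothetical. They catch e-mail addresses and phone-like runs of digits, but they miss names, postal addresses, and any context that can still identify a person. Pattern masking of this kind is not anonymization in the GDPR sense, and a human check remains necessary.

```python
import re

# Illustrative patterns only: they will miss some formats and may
# also mask other long digit sequences (file numbers, dates with separators).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"(?:\+|00)?\d[\d .\-/]{7,}\d")


def redact(text):
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


# Hypothetical note that a coordinator might want summarized.
note = "Contact marie@example.org or +32 470 12 34 56."
print(redact(note))
```

Running the masking step locally, before anything reaches an external service, keeps the original identifiers out of the prompt.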

4. Transparency and trust: Communicate about uses

- Clearly inform when AI is used in content or services

- Explain tool choices and their implications

- Share learnings with other sector actors

- Implement feedback mechanisms in case of problematic use

5. Responsible innovation: Human-centered

- Explore applications that strengthen real social impact

- Regularly evaluate the effective added value of tools used

- Keep humans at the center: AI remains a tool, never a substitute for commitment

- Contribute to collective reflections on ethical AI framing

A Living Document, to Adapt to Your Needs

This charter is not set in stone. It is meant as a starting point, a framework for reflection that each organization can and should adapt to its own reality: its mission, its audiences, its means, and its level of digital maturity.

The Word format is deliberately chosen to facilitate this appropriation. You can freely:

- Modify commitments according to your priorities

- Add elements specific to your sector of activity

- Remove “advanced” points if your organization is starting out

- Supplement it with your own best practices

Acknowledgments

I warmly thank the BeEducation team for organizing this workshop and the quality of exchanges with participants. It’s from this collective intelligence that this charter was born.

Note: the structuring of this web page benefited from the assistance of Claude Code from Anthropic, and documentary research was facilitated by Perplexity.

Additional Resources

To go further on the environmental impact of digital technology and AI:

- The carbon footprint of digital: comparable to civil aviation

- Your computer weighs 800 kg: the hidden impact of our devices

- Responsible Digital Charter (INR)

- EcoLogits Calculator

- Framamia - Understanding AI to demystify it

What Now?

If this charter inspires you, you can:

- Adapt it to your organization and share it with your team

- Disseminate it to other non-profits in your network

- Contribute to its improvement by sharing your feedback

This article reflects the state of knowledge in January 2026. Because the field evolves rapidly, it will be updated regularly.